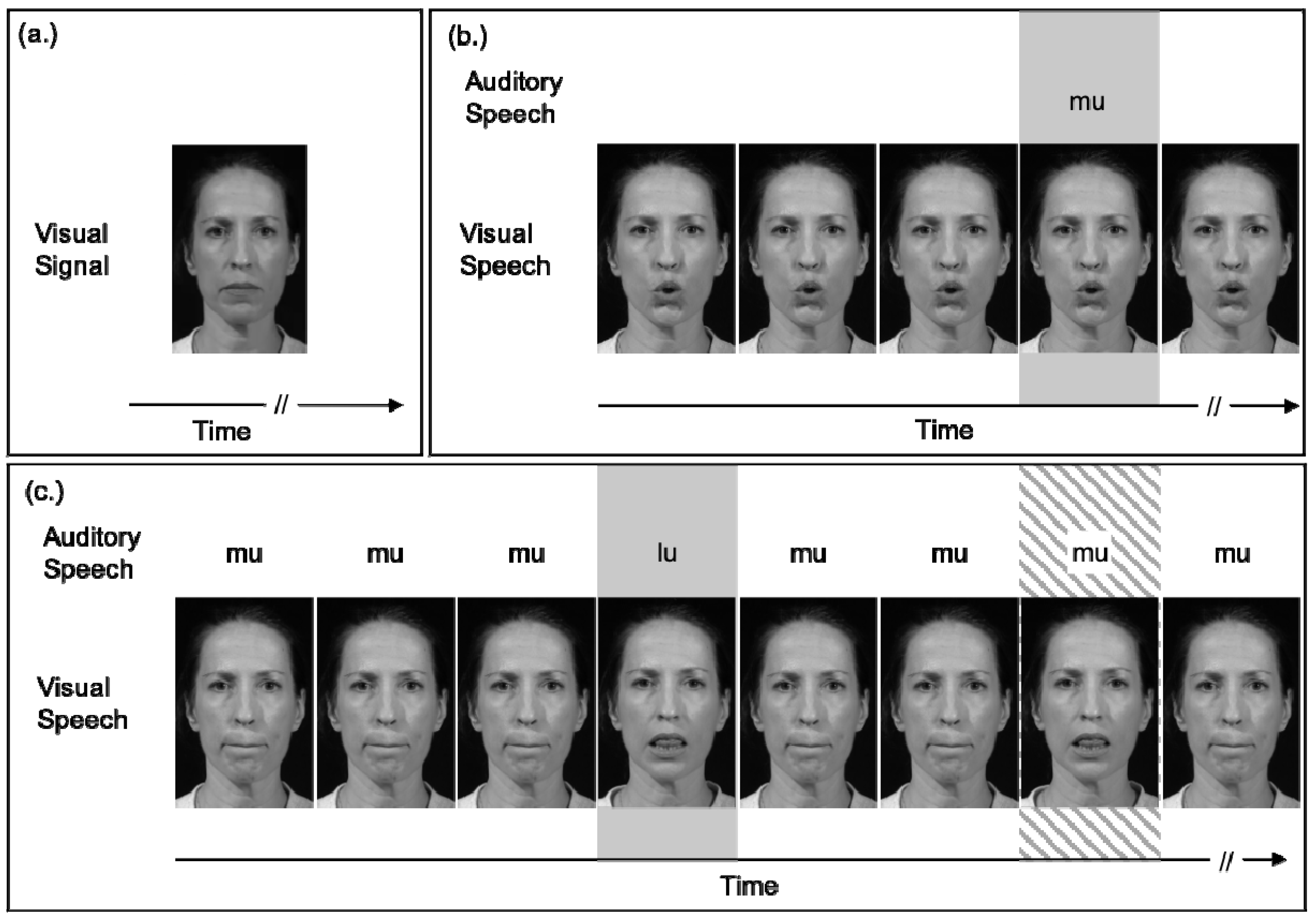

Department of Psychology, Norwegian University of Science and Technology, Trondheim, Norway.

In audiovisual speech perception, visual information from a talker's face during mouth articulation is available before the onset of the corresponding audio speech, and thereby allows the perceiver to use visual information to predict the upcoming audio. This prediction from phonetically congruent visual information modulates audiovisual speech perception and leads to a decrease in N1 and P2 amplitudes and latencies compared to the perception of audio speech alone. Whether audiovisual experience, such as with musical training, influences this prediction is unclear, but if it does, this may explain some of the variations observed in previous research. The current study addresses whether audiovisual speech perception is affected by musical training, first assessing N1 and P2 event-related potentials (ERPs) and, in addition, inter-trial phase coherence (ITPC). Musicians and non-musicians were presented with the syllable /ba/ in audio only (AO), video only (VO), and audiovisual (AV) conditions. With the predictive effect of mouth movement isolated from the AV speech (AV−VO), results showed that, compared to audio speech, both groups had a lower N1 latency and P2 amplitude and latency. Moreover, they also showed lower ITPCs in the delta, theta, and beta bands in audiovisual speech perception. However, musicians showed significant suppression of N1 amplitude and desynchronization in the alpha band in audiovisual speech, which was not present for non-musicians. Collectively, the current findings indicate that early sensory processing can be modified by musical experience, which in turn can explain some of the variations in previous AV speech perception research.

Perception is shaped by information coming to multiple sensory systems, such as information from hearing speech and seeing a talker's face coming through the auditory and visual pathways. (2005) showed that this audiovisual information facilitated perception. Further research added that the visual information from facial articulations, which begins before the sound onset, can also work as a visual cue that leads the perceiver to form some prediction about the upcoming speech sound. This prediction by phonetically congruent visual information can modulate early processing of the audio signal (Stekelenburg and Vroomen, 2007; Arnal et al., 2009; Pilling, 2009; Baart et al., 2014; Hsu et al., 2016; Paris et al., 2016a,b, 2017). Insight into the influence of multisensory experiences, such as musical training, is only beginning to unfold (Petrini et al., 2009a,b, 2011; Lee and Noppeney, 2011, 2014; Paraskevopoulos et al., 2012; Behne et al., 2013; Proverbio et al., 2016; Jicol et al., 2018), and how this regulates audiovisual modulation in speech is yet to be understood.

Behavioral research on audiovisual (AV) speech has shown that visual cues from mouth, jaw and lip movements that start before the onset of a corresponding audio signal can facilitate reaction time and intelligibility in speech perception, compared with perception of the corresponding audio only (AO) condition (Schwartz et al., 2004; Paris et al., 2013).
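To make the inter-trial phase coherence (ITPC) measure mentioned above more concrete, the following is a minimal sketch in Python with NumPy. It is not the analysis pipeline used in the study: it assumes a simplified FFT-based estimate over the whole epoch (time-frequency ITPC is usually computed with wavelets), and the function name `itpc_fft`, the sampling rate, and the synthetic 10 Hz data are all hypothetical choices made for illustration. The idea is that each trial contributes a unit-length phase vector per frequency, and ITPC is the length of the average vector: close to 1 when phases align across trials, close to 0 when they are random.

```python
import numpy as np


def itpc_fft(trials):
    """Simplified ITPC per frequency bin (illustrative, not the study's method).

    trials: array of shape (n_trials, n_samples), one EEG channel.
    Returns an array of ITPC values, one per rFFT frequency bin.
    """
    spectra = np.fft.rfft(trials, axis=1)
    magnitude = np.abs(spectra)
    # Normalise each coefficient to unit length so only phase remains;
    # bins with zero magnitude are left at 0 to avoid division by zero.
    unit_phase = np.divide(
        spectra, magnitude, out=np.zeros_like(spectra), where=magnitude > 0
    )
    # Length of the mean phase vector across trials.
    return np.abs(unit_phase.mean(axis=0))


# Synthetic demonstration (all values below are assumptions).
rng = np.random.default_rng(0)
sfreq = 250.0                      # hypothetical sampling rate in Hz
n_trials, n_samples = 200, 250     # 1 s epochs, so bins are 1 Hz apart
t = np.arange(n_samples) / sfreq
freqs = np.fft.rfftfreq(n_samples, d=1.0 / sfreq)
bin_10hz = int(np.argmin(np.abs(freqs - 10.0)))

noise = 0.5 * rng.standard_normal((n_trials, n_samples))
# Same phase on every trial: high coherence expected at 10 Hz.
locked = np.sin(2 * np.pi * 10.0 * t) + noise
# Random phase on every trial: low coherence expected at 10 Hz.
random_phases = rng.uniform(0, 2 * np.pi, size=(n_trials, 1))
jittered = np.sin(2 * np.pi * 10.0 * t + random_phases) + noise

print("ITPC at 10 Hz, phase-locked trials:", itpc_fft(locked)[bin_10hz])
print("ITPC at 10 Hz, random-phase trials:", itpc_fft(jittered)[bin_10hz])
```

In this sketch a lower ITPC in a band means that the phase of the oscillation is less consistent from trial to trial, which is the sense in which the text reports lower ITPCs in audiovisual compared with audio speech.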
